PCI DSS, the Payment Card Industry Data Security Standard, is a worldwide standard set up to help businesses process card payments securely and reduce card fraud. It does this through tight controls on the storage, transmission and processing of the cardholder data that businesses handle. It is mandatory for all businesses that accept card payments to comply and obtain PCI certification. This applies to all types of card payments, whether taken online, by mail, over the phone or using card machines.

As most organizations are finding, implementing the PCI DSS requirements and becoming compliant is very costly. We intend to bring those costs down by giving you a guideline on how to use free tools and resources to make compliance less painful.

System Update:

The first thing you should do on any Linux distribution is apply the pending system updates. An up-to-date system has the latest vendor-supplied security patches installed, which PCI DSS also expects.

For RHEL server:

[cc]# yum update[/cc]

For Debian servers:

[cc]# apt-get update && apt-get upgrade[/cc]

Linux Systems and PCI DSS Requirements:

Several sections of the PCI DSS requirements apply to Linux systems, and we will discuss them in this article.

1) Maintaining your Secure Network:

Systems are linked together via internal or external networks, like the internet. PCI DSS recognizes that network connectivity should be properly protected, especially from untrusted network segments. While most of PCI DSS requirement 1 applies to network components, part of it also applies to Linux systems: installing and maintaining a system firewall to protect data.

You can run the following command to check the status of iptables modules in the Linux Kernel.

[cc]# lsmod | grep table
ip_tables 27239 0
[/cc]

On Linux systems the firewall is usually managed through iptables, with the configuration stored on disk. Keep in mind that the running rule set can differ from the stored one. A good example of this is a dynamic blacklist, which gets filled by an external tool (like fail2ban). In CentOS 7 the iptables service is replaced by the firewalld service.

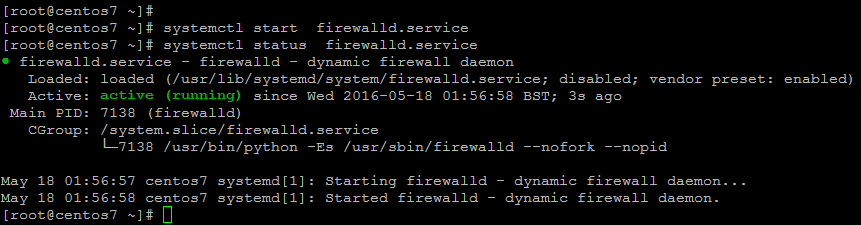

To start the service on CentOS 7, use the command below.

[cc]# systemctl start firewalld.service[/cc]

The network requirements state that insecure services should be identified and, where possible, disabled together with their related protocols. The ‘telnet’ protocol and the “r” services, like rexec, rlogin, rcp, and rsh, are insecure and should not be used. Some services can limit access, for example through access control lists or IP filtering, which might make them acceptable in some environments. Where possible, insecure services should be replaced with a more secure alternative. For example, all Linux distributions now use SSH by default for remote administration.

The following are a few examples of commonly used unencrypted protocols and their secure alternatives.

- Telnet -> (SSH, Mosh)

- FTP -> (FTPS, SFTP, SCP)

- HTTP -> (HTTPS)

- IMAP -> (IMAPS)

- POP3 -> (POP3S)

- SNMP v1/v2 -> (SNMP v3)

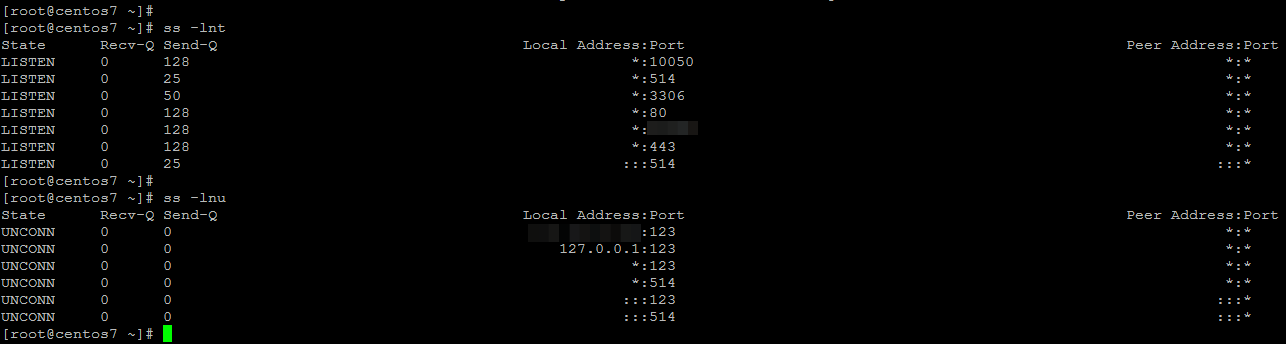

To check what protocols are enabled on a system, the netstat or ss utility can be used as shown.

[cc]# netstat -nlp[/cc]

[cc]
Active Internet connections (only servers)
Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name
tcp 0 0 0.0.0.0:10051 0.0.0.0:* LISTEN 1214/zabbix
tcp 0 0 0.0.0.0:514 0.0.0.0:* LISTEN 728/rsyslogd
tcp 0 0 0.0.0.0:3306 0.0.0.0:* LISTEN 2221/mysqld
tcp 0 0 0.0.0.0:80 0.0.0.0:* LISTEN 1208/httpd
tcp 0 0 0.0.0.0:22 0.0.0.0:* LISTEN 1199/sshd
tcp 0 0 0.0.0.0:443 0.0.0.0:* LISTEN 1208/httpd
tcp6 0 0 :::514 :::* LISTEN 728/rsyslogd
[/cc]

[cc]# ss -lnt[/cc]

[cc]# ss -lnu[/cc]
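The listening sockets reported by ss can be filtered for ports that usually carry cleartext protocols. The function below is a minimal sketch, not a complete audit: the name flag_cleartext_ports and the port-to-service table are assumptions made for illustration, and a port number is only a hint (TCP 514, for instance, may be rsyslog rather than rsh).

```shell
# Reads `ss -lnt` output on stdin and prints "<port> <service>" for every
# listening port found in a small, hypothetical table of cleartext services.
flag_cleartext_ports() {
    awk '
        BEGIN {
            svc[21] = "ftp";    svc[23] = "telnet"; svc[80] = "http"
            svc[110] = "pop3";  svc[143] = "imap"
            svc[512] = "rexec"; svc[513] = "rlogin"; svc[514] = "rsh"
        }
        $1 == "LISTEN" {
            # The local address is the 4th column; the port follows the last ":"
            n = split($4, parts, ":")
            port = parts[n]
            if (port in svc) print port, svc[port]
        }
    '
}
```

It could be used as `ss -lnt | flag_cleartext_ports`. Every hit should be reviewed by hand before a service is disabled or replaced.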

2) Using your own security parameters:

Do not use vendor-supplied defaults for system passwords and other security parameters. Always change vendor-supplied defaults before installing a system on the network. Examples include passwords and simple network management protocol (SNMP) community strings. Unnecessary accounts should be removed as well.

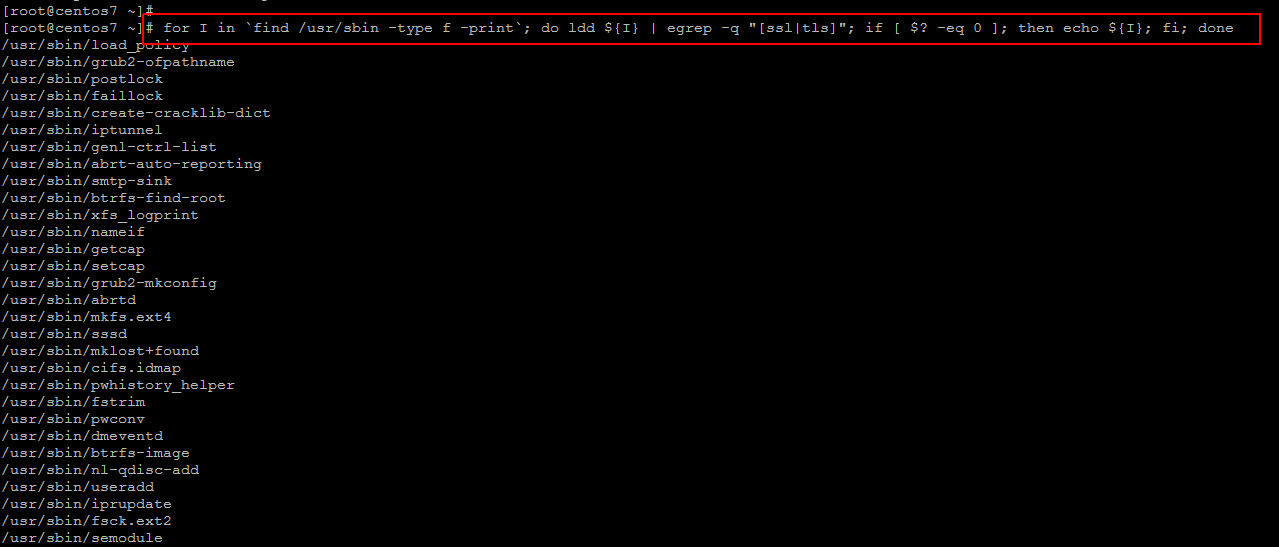

Collect the list of installed packages and running processes, and search it for known packages and processes that use SSL/TLS. If you really want to cover every part of the system, consider analyzing the binaries available on it. Binaries linked against SSL/TLS libraries might need specific configuration to disable older protocols.

[cc]# for I in $(find /usr/sbin -type f); do if ldd "$I" 2>/dev/null | grep -Eq 'ssl|tls'; then echo "$I"; fi; done[/cc]

Several protocols have proven too weak to properly protect sensitive data. The POODLE attack, for example, effectively made SSLv3 a protocol that should be banned. Because of its usage in web services, PCI DSS section 2.2.3 specifically states that SSLv3 and early TLS versions should no longer be used.

Make the following changes to your server configurations.

Apache Webserver:

Disable SSLv2 and SSLv3 on Apache installations by adding the ‘SSLProtocol’ option and specifying which protocols NOT to use. The configuration file that holds it depends on the Linux distribution.

For RHEL/CentOS:

[cc]# vim /etc/httpd/conf/httpd.conf[/cc]

[cc]SSLProtocol all -SSLv2 -SSLv3[/cc]

For Debian:

[cc]# vim /etc/apache2/mods-available/ssl.conf[/cc]

[cc]SSLProtocol all -SSLv2 -SSLv3[/cc]

Dovecot Conf:

[cc]ssl_protocols = !SSLv2 !SSLv3[/cc]

Nginx Conf:

Add this to the server configuration file, in the http context.

[cc]# vim /etc/nginx/nginx.conf[/cc]

[cc]ssl_protocols TLSv1 TLSv1.1 TLSv1.2;[/cc]

Postfix Conf:

In the Postfix configuration, the protocols have to be excluded explicitly by prefixing them with an exclamation mark.

[cc]
smtpd_tls_mandatory_protocols=!SSLv2,!SSLv3
smtp_tls_mandatory_protocols=!SSLv2,!SSLv3
smtpd_tls_protocols=!SSLv2,!SSLv3
smtp_tls_protocols=!SSLv2,!SSLv3
[/cc]
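After editing these files it helps to confirm which protocol versions are still enabled. The sketch below does this for an Nginx configuration file only. The function name weak_nginx_protocols is hypothetical, and the list of protocols treated as weak (SSLv2, SSLv3, TLSv1 and TLSv1.1) is an assumption that should be adjusted to your own policy.

```shell
# Prints each weak protocol named in an ssl_protocols directive of the
# given nginx configuration file, one per line. Prints nothing if none
# of the assumed weak protocols are enabled.
weak_nginx_protocols() {
    # $1: path to an nginx configuration file
    grep -E '^[[:space:]]*ssl_protocols[[:space:]]' "$1" |
        tr -s ' \t;' '\n' |
        grep -E '^(SSLv2|SSLv3|TLSv1|TLSv1\.1)$' |
        sort -u
}
```

For example, `weak_nginx_protocols /etc/nginx/nginx.conf` lists the entries to review. The check only reads the file passed to it, so included files need to be checked separately.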

3) Maintain a Vulnerability Management Program:

Systems should be protected against malicious software components, known as malware, so use anti-virus software and update it regularly.

Deploy anti-virus software on all systems commonly affected by malicious software (particularly personal computers and servers).

The following is a malware checklist for PCI DSS and Linux.

1- Regular scans via cronjob

2- Proper logging (preferably to syslog)

3- Up-to-date virus definitions

The following open source tools can be used on Linux servers.

ClamAV:

One of the most commonly used malware scanners on Linux is ClamAV. Like any other anti-virus/malware solution, it should be kept up to date.

Linux Malware Detect (LMD):

A great addition to ClamAV is LMD, or Linux Malware Detect. It is released under GPLv2 and can leverage the scanning power of ClamAV. It can also use the inotify functionality of Linux to scan new and modified files.
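To cover the checklist items on regular scans and logging, the result of a ClamAV scan can be reduced to a single number and written to syslog. This is a sketch under assumptions: the helper name infected_count, the scanned path and the logger tag are all hypothetical, and the helper relies on the "Infected files:" line that clamscan prints in its scan summary.

```shell
# Reads clamscan output on stdin and prints the number from the
# "Infected files: N" summary line. Returns 1 if no summary line is found.
infected_count() {
    awk -F': ' '
        /^Infected files:/ { print $2; found = 1 }
        END { if (!found) exit 1 }
    '
}

# Hypothetical usage inside a script started from cron:
#   count=$(clamscan -r /var/www | infected_count) &&
#       logger -t clamscan "infected files: $count"
```

Running freshclam before the scan keeps the virus definitions current, which covers the third item of the checklist.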

4) Develop and maintain secure systems and applications:

Develop software applications in accordance with PCI DSS (for example, secure authentication and logging) and based on industry best practices, and incorporate information security throughout the software development life cycle.

Test all security patches, as well as system and software configuration changes, before deployment. Ensure that all system components and software have the latest vendor-supplied security patches installed. Install critical security patches within one month of release.

5) Implement Strong Access Control Measures:

To ensure that critical data can only be accessed by authorized personnel, systems and processes must be in place to limit access based on need to know and according to job responsibilities.

Assigning a unique identification (ID) to each person with access ensures that each individual is uniquely accountable for his or her actions. When such accountability is in place, actions taken on critical data and systems are performed by, and can be traced to, known and authorized users. Limit access to system components and cardholder data to only those individuals whose job requires such access.

Authentication Factors:

Several controls highlight the need for proper access control, protection, and logging of changes. This applies to system configuration files (e.g. for PAM or SSH), but also to the logging itself. In other words, we should take appropriate measures to safeguard these configurations as well.

Inactive accounts:

Inactive accounts on the system are an unnecessary security risk. Accounts of this kind usually exist because there was a one-time need to log in, or because they were simply forgotten after an employee left the company.

To determine the last time a user logged in, the ‘last’ command can be used. The information is stored in /var/log/wtmp.
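Besides ‘last’, the lastlog command prints one line per account and marks accounts that have never been used with "**Never logged in**". The helper below is a small sketch that extracts those account names. The function name never_logged_in is an assumption for illustration.

```shell
# Reads `lastlog` output on stdin and prints the user name of every
# account marked "**Never logged in**".
never_logged_in() {
    awk '/\*\*Never logged in\*\*/ { print $1 }'
}
```

It can be used as `lastlog | never_logged_in`. System accounts normally show up in this list too, so the result is a starting point for review rather than a list of accounts to delete. Running `lastlog -b 90` similarly shows accounts whose last login is older than 90 days.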

Linux PAM:

Pluggable Authentication Modules (PAM) are used to arrange the ways users can present themselves and prove they have a need to access the system. The PCI DSS standard does not describe how PAM should be configured, but it gives several pointers regarding:

- Password history

- Password strength

- Password lockout

The PAM configuration differs between Linux distributions, so it is important to make the right changes, and to the right files.

On Ubuntu, the file permissions of the password files should be as shown below.

[cc] /etc/passwd (644, owner root, group root)

/etc/shadow (640, owner root, group shadow)

[/cc]
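These permissions can be verified with a short helper instead of reading ls output by eye. The sketch below assumes GNU stat (for the -c option), and the function name check_mode is hypothetical. It compares only the octal mode, not the owner or group.

```shell
# Compares the octal permission bits of a file with an expected value.
# Prints "OK ..." and returns 0 on a match, prints "MISMATCH ..." and
# returns 1 otherwise. Returns 2 if the file cannot be read by stat.
check_mode() {
    file=$1
    expected=$2
    actual=$(stat -c '%a' "$file") || return 2
    if [ "$actual" = "$expected" ]; then
        echo "OK $file $actual"
    else
        echo "MISMATCH $file $actual (expected $expected)"
        return 1
    fi
}
```

For the files above this would be `check_mode /etc/passwd 644` and `check_mode /etc/shadow 640`.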

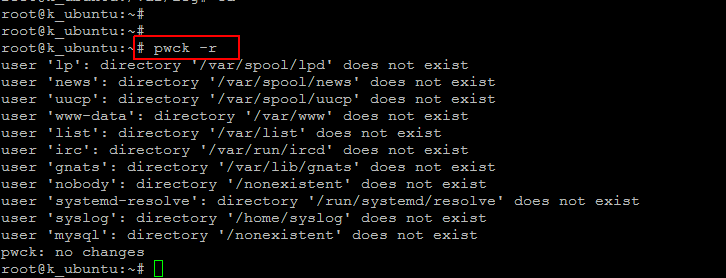

A quick and powerful way to test both /etc/passwd and /etc/shadow is the pwck utility, which covers a wide variety of checks:

- a unique and valid user name

- a valid user and group identifier

- a valid primary group

- a valid home directory

- a valid login shell

- every passwd entry has a matching shadow entry

- every shadow entry has a matching passwd entry

- passwords are specified in the shadowed file

- shadow entries have the correct number of fields

- shadow entries are unique in shadow

- the last password changes are not in the future

Run the command below to test.

[cc]# pwck -r [/cc]

This shows the results of each of the checks mentioned above. Missing home directories, for example, are reported as well.

6) Regularly Monitor and Test Networks:

Track and monitor all access to network resources and cardholder data, and regularly test security systems and processes. Logging mechanisms and the ability to track user activities are critical in preventing, detecting, or minimizing the impact of a data compromise. The presence of logs in all environments allows thorough tracking, alerting, and analysis when something does go wrong. Determining the cause of a compromise is very difficult without system activity logs.

Normal Logging:

The most common form of normal logging on Linux is syslog. It is still a popular way to store information, ranging from boot information to kernel events and software-related information.

For Linux logging it is important to check the “health” of the logging configuration itself. This determines what happens with events, such as which events to capture and which to ignore. There are two main points to consider for logging.

Rotation:

Proper log rotation should be in place so that previously stored data is not destroyed. How it is set up depends on the number of transactions and the level of detail stored.

Systemd:

The latest Linux systems use ‘systemd’ as their service manager. These systems also use journald, a journal logging utility. It is not yet a full replacement for syslog: some information is stored in both, while other information is only available in one of the two. When setting up (and auditing) a system with systemd, be sure to check the configuration of both syslog and journald.

Linux System Audit for PCI DSS compliance:

Auditd allows us to monitor two types of things: system calls and files. Monitoring files is fairly straightforward. The system calls, however, are the main challenge.

Besides logging, the system can collect audit events. Systems running Linux usually have support for the audit framework enabled.

Here are some of the audit rules that we have defined for logging.

[cc]# vim /etc/audit/audit.rules[/cc]

[cc]
# Exclude excessive messages
-a exclude,always -F msgtype=CWD
# Log all root and sudo commands
-a exit,always -S all -F euid=0 -F perm=wxa -k root
# Monitor the log directories and files
-a always,exit -S all -F dir=/var/log/audit -F perm=wra -k audit-logs
-w /var/log/auth.log -p wra -k logs
-w /var/log/syslog -p wra -k logs
# Invalid logical access
-a always,exit -F arch=b64 -S all -F exit=-13 -k access
# Creation and deletion of system-level objects
-a always,exit -S all -F dir=/etc -F perm=wa -k system
-a always,exit -S all -F dir=/boot -F perm=wa -k system
-a always,exit -S all -F dir=/usr/lib -F perm=wa -k system
-a always,exit -S all -F dir=/bin -F perm=wa -k system
-a always,exit -S all -F dir=/lib -F perm=wa -k system
-a always,exit -S all -F dir=/lib64 -F perm=wa -k system
-a always,exit -S all -F dir=/sbin -F perm=wa -k system
-a always,exit -S all -F dir=/usr/bin -F perm=wa -k system
-a always,exit -S all -F dir=/usr/sbin -F perm=wa -k system
[/cc]

The audit framework is powerful for debugging and troubleshooting issues on your system. Additionally it is of great help in detecting unauthorized events or system intrusion.

With these rules you should be able to get the Linux audit framework up and running.

Accounting Information:

Another category of data to store is accounting details. This might be used for billing, troubleshooting or further processing later. Accounting details are usually stored for actions performed by users, like running a particular process, or for the connect time to a system. You should ensure that accounting details are treated the same way as logging and audit information, since they can be a valuable resource during troubleshooting or incident response on Linux.

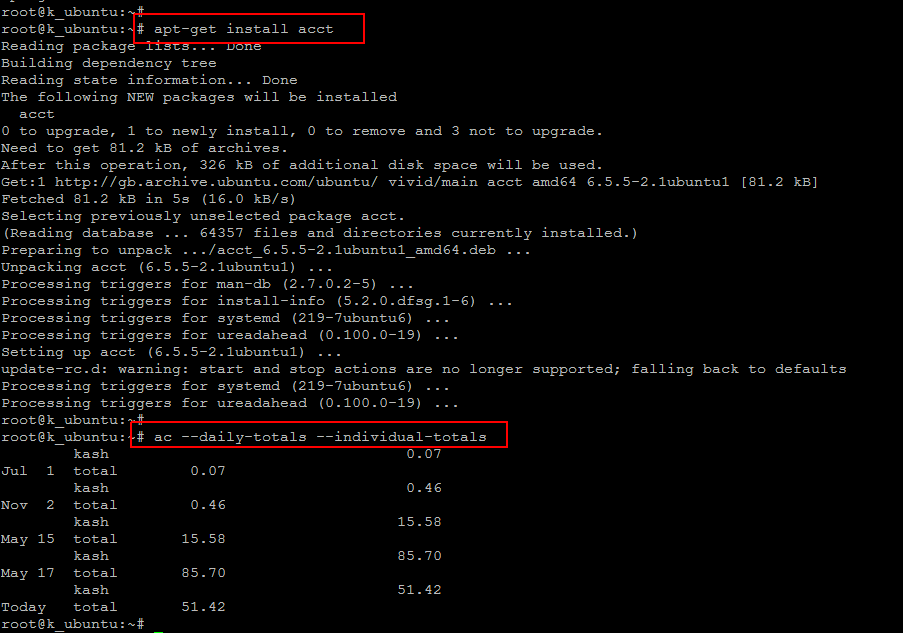

Run the commands below to install the ‘ac’ utility and then check the users' connect time.

[cc]# apt-get install acct [/cc]

[cc]# ac --daily-totals --individual-totals[/cc]

Conclusion:

Being compliant with PCI DSS means that you are doing your best to keep your customers' valuable information safe and out of the hands of people who could use it fraudulently. Not holding on to data reduces the risk that your customers will be affected by fraud. Make sure that you are, and remain, compliant with the PCI DSS requirements: change default passwords and settings whenever you install or implement a new piece of hardware or software, and then change all passwords once every three months. Look out for suspicious activity such as unauthorized access to your systems, failed login attempts or out-of-hours activity. Limit the number of login attempts so that access is locked once the threshold has been reached. Remove user accounts that are no longer being used. Following this guide will put your Linux system in a much better position for compliance. I hope you enjoyed reading.