Introduction

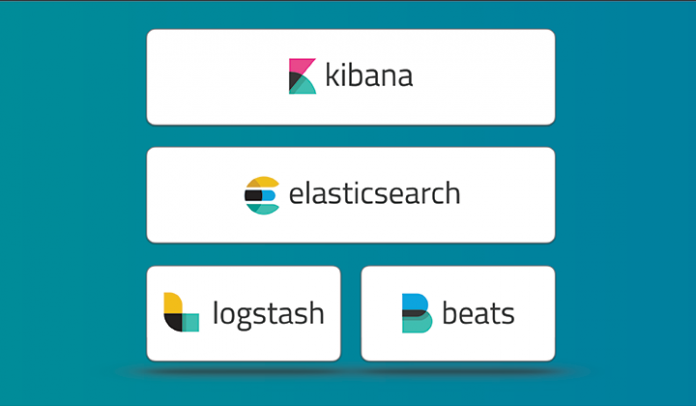

For those who don’t know, the Elastic Stack (ELK Stack) is a set of software components developed by Elastic. The components include:

- Beats: open-source data shippers that run as agents on servers and send different types of operational data to Elasticsearch, either directly or through Logstash.

- Elasticsearch: a highly scalable, open-source full-text search and analytics engine. It allows you to store, search, and analyze large volumes of data quickly and in near real time. It is generally used as the underlying engine that powers applications with complex search features and requirements.

- Kibana: open source analytics and visualization platform designed to work with Elasticsearch. It is used to interact with data stored in Elasticsearch indices. It has a browser-based interface that enables quick creation and sharing of dynamic dashboards that display changes to Elasticsearch queries in real time.

- Logstash: a log and event collection engine that provides a real-time pipeline. It can take data from multiple sources and convert it into JSON documents.

This tutorial will take you through the process of installing the Elastic Stack on a CentOS 7 server.

Getting started

First of all, Elasticsearch and Logstash need Java 8, so download the official Oracle JDK rpm package:

# wget --no-cookies --no-check-certificate --header "Cookie: gpw_e24=http:%2F%2Fwww.oracle.com%2F; oraclelicense=accept-securebackup-cookie" "http://download.oracle.com/otn-pub/java/jdk/8u77-b02/jdk-8u77-linux-x64.rpm"

Install it with rpm:

# rpm -ivh jdk-8u77-linux-x64.rpm

Verify that it works by checking the installed version:

# java -version
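If you want to automate this check (for example in a provisioning script), the major version can be parsed out of the `java -version` banner. This is a minimal sketch assuming Bash; the function name `java_major_version` is hypothetical. Keep in mind that `java -version` prints to stderr, not stdout.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read the banner printed by `java -version` on stdin
# and print the major Java version (8 for "1.8.0_77", 11 for "11.0.2").
java_major_version() {
  local ver
  # Extract the quoted version string from the first line that has one.
  ver=$(sed -n 's/.*version "\([^"]*\)".*/\1/p' | head -n 1)
  case "$ver" in
    1.*) printf '%s\n' "$ver" | cut -d. -f2 ;;  # legacy scheme: 1.8.0_77 -> 8
    *)   printf '%s\n' "$ver" | cut -d. -f1 ;;  # newer scheme: 11.0.2 -> 11
  esac
}
```

For example, `java -version 2>&1 | java_major_version` prints `8` when the Oracle JDK 8 package above is the default JVM.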

Install Elasticsearch

First, download and install the public signing key:

# rpm --import https://artifacts.elastic.co/GPG-KEY-elasticsearch

Next, create a file called elasticsearch.repo in /etc/yum.repos.d/, and paste the following lines:

[elasticsearch-5.x]
name=Elasticsearch repository for 5.x packages
baseurl=https://artifacts.elastic.co/packages/5.x/yum
gpgcheck=1
gpgkey=https://artifacts.elastic.co/GPG-KEY-elasticsearch
enabled=1
autorefresh=1
type=rpm-md
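If you would rather not open an editor, the same file can be written in one step with a here-document. This is a minimal sketch meant to be run as root; the function name `write_elastic_repo` is hypothetical, and its optional path argument only exists so the output can be checked somewhere other than /etc.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write the Elastic 5.x yum repository definition.
# Defaults to the path used in this tutorial; pass another path to test it.
write_elastic_repo() {
  local target=${1:-/etc/yum.repos.d/elasticsearch.repo}
  # Quoted 'EOF' so nothing inside the here-document is expanded by the shell.
  cat > "$target" <<'EOF'
[elasticsearch-5.x]
name=Elasticsearch repository for 5.x packages
baseurl=https://artifacts.elastic.co/packages/5.x/yum
gpgcheck=1
gpgkey=https://artifacts.elastic.co/GPG-KEY-elasticsearch
enabled=1
autorefresh=1
type=rpm-md
EOF
}
```

Calling `write_elastic_repo` with no argument writes /etc/yum.repos.d/elasticsearch.repo.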

Now the repository is ready for use. Install Elasticsearch with yum:

# yum install elasticsearch

Configuring Elasticsearch

Go to the configuration directory and edit the elasticsearch.yml configuration file:

# $EDITOR /etc/elasticsearch/elasticsearch.yml

Enable memory locking by uncommenting this line (line 43 in the default file):

bootstrap.memory_lock: true

Then scroll down to the “Network” section and uncomment the following lines, setting network.host to your server’s IP address:

network.host: 192.168.0.1
http.port: 9200

Save and exit.
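Uncommenting by line number is fragile, since the default file can change between package versions. As an alternative, the sketch below sets a `key: value` pair whether the line is currently commented out or not, and appends it if the key is missing. It assumes GNU sed (for `-i`), as shipped with CentOS 7, and only handles flat, top-level keys like the ones above; the name `set_yaml_key` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set "key: value" in a flat YAML file such as
# /etc/elasticsearch/elasticsearch.yml. Every line matching the key, commented
# ("#key: ...") or not, is replaced; if none exists, the pair is appended.
# Requires GNU sed. The value must not contain "|" or "&" (sed replacement).
set_yaml_key() {
  local file=$1 key=$2 value=$3 re
  # Escape dots so "network.host" only matches a literal dot.
  re=$(printf '%s' "$key" | sed 's/\./\\./g')
  if grep -q "^[#[:space:]]*${re}:" "$file"; then
    sed -i "s|^[#[:space:]]*${re}:.*|${key}: ${value}|" "$file"
  else
    printf '%s: %s\n' "$key" "$value" >> "$file"
  fi
}
```

For example, as root: `set_yaml_key /etc/elasticsearch/elasticsearch.yml bootstrap.memory_lock true`, followed by the same call for `network.host` and `http.port`.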

Next, allow the Elasticsearch service to lock memory. Edit /usr/lib/systemd/system/elasticsearch.service and uncomment the line:

LimitMEMLOCK=infinity

Save and exit.
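Changes to the packaged unit file under /usr/lib/systemd/system/ can be overwritten when Elasticsearch is upgraded. The Elasticsearch documentation describes a systemd drop-in override instead, which `systemctl edit elasticsearch` opens for you. Below is a minimal sketch that writes the same drop-in non-interactively, as root; the function name `write_memlock_override` is hypothetical, and the directory argument is only there for testing.

```shell
#!/usr/bin/env bash
# Hypothetical helper: create a systemd drop-in that raises the memlock limit
# for elasticsearch.service without touching the packaged unit file.
write_memlock_override() {
  local dir=${1:-/etc/systemd/system/elasticsearch.service.d}
  mkdir -p "$dir"
  # Settings in this [Service] section are merged over the packaged unit.
  cat > "$dir/override.conf" <<'EOF'
[Service]
LimitMEMLOCK=infinity
EOF
}
```

Either way, systemd only picks up the change after `systemctl daemon-reload`, which the next steps run anyway.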

Now edit the Elasticsearch system configuration file:

# $EDITOR /etc/sysconfig/elasticsearch

Uncomment line 60 and make sure it contains the following:

MAX_LOCKED_MEMORY=unlimited

Elasticsearch is now configured. It will listen on port 9200 on the IP address you specified (change it to “localhost” if necessary). Next, reload systemd, then enable and start the service:

# systemctl daemon-reload
# systemctl enable elasticsearch
# systemctl start elasticsearch
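To confirm that Elasticsearch is answering and that memory locking actually took effect, the Elasticsearch documentation suggests querying `_nodes?filter_path=**.mlockall`. The sketch below wraps the check in a hypothetical `check_mlockall` function that reads that JSON response on stdin. It matches the field with grep instead of a JSON parser, which assumes the field appears as `"mlockall": true` or `false`, so treat it as a quick sanity check rather than a robust parser.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read the JSON returned by
#   curl -s 'http://localhost:9200/_nodes?filter_path=**.mlockall'
# on stdin. Succeeds only if no node reports mlockall false and at least
# one node reports mlockall true.
check_mlockall() {
  local body
  body=$(cat)
  if printf '%s' "$body" | grep -q '"mlockall"[[:space:]]*:[[:space:]]*false'; then
    return 1
  fi
  printf '%s' "$body" | grep -q '"mlockall"[[:space:]]*:[[:space:]]*true'
}
```

For example: `curl -s 'http://localhost:9200/_nodes?filter_path=**.mlockall' | check_mlockall && echo "memory lock is active"` (use your server’s address instead of localhost if you set network.host to a specific IP).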

Install Kibana

Once Elasticsearch is configured and running, install Kibana and put it behind a web server. In this case, we will use Nginx.

Unlike Elasticsearch, which was installed from the yum repository, Kibana is installed here by downloading its RPM with wget and installing it with rpm:

# wget https://artifacts.elastic.co/downloads/kibana/kibana-5.1.1-x86_64.rpm
# rpm -ivh kibana-5.1.1-x86_64.rpm

Edit the Kibana configuration file:

# $EDITOR /etc/kibana/kibana.yml

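There, uncomment the following lines. If you bound Elasticsearch to a specific IP address earlier, use that address in elasticsearch.url instead of localhost:

server.port: 5601
server.host: "localhost"
elasticsearch.url: "http://localhost:9200"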

Save and exit, then enable and start Kibana:

# systemctl enable kibana
# systemctl start kibana

Now install Nginx and configure it as a reverse proxy, so that Kibana can be reached from the public IP address.

Nginx is available in the EPEL repository, so enable it first:

# yum -y install epel-release

Next, install Nginx together with httpd-tools, which provides the htpasswd utility:

# yum -y install nginx httpd-tools

In the Nginx configuration file (/etc/nginx/nginx.conf), remove the default server { } block so it does not conflict with the Kibana virtual host. Then save and exit.

Create a virtual host configuration file for Kibana:

# $EDITOR /etc/nginx/conf.d/kibana.conf

There, paste the following content, replacing elk-stack.co with your own domain name:

server {
    listen 80;

    server_name elk-stack.co;

    auth_basic "Restricted Access";
    auth_basic_user_file /etc/nginx/.kibana-user;

    location / {
        proxy_pass http://localhost:5601;
        proxy_http_version 1.1;
        proxy_set_header Upgrade $http_upgrade;
        proxy_set_header Connection 'upgrade';
        proxy_set_header Host $host;
        proxy_cache_bypass $http_upgrade;
    }
}

Create the password file referenced by auth_basic_user_file, with a user named admin. htpasswd will prompt you for the password (choose a strong one):

# htpasswd -c /etc/nginx/.kibana-user admin

Lastly, enable and start Nginx:

# systemctl enable nginx
# systemctl start nginx

Install Logstash

As with Kibana, download and install the Logstash RPM:

# wget https://artifacts.elastic.co/downloads/logstash/logstash-5.1.1.rpm
# rpm -ivh logstash-5.1.1.rpm

Next, create an SSL certificate for the Logstash server, which Filebeat will use to verify it. First, edit the openssl.cnf file:

# $EDITOR /etc/pki/tls/openssl.cnf

In the [ v3_ca ] section, add the server’s IP address as a subject alternative name, replacing IP_ADDRESS with your server’s address:

[ v3_ca ]
# Server IP Address
subjectAltName = IP: IP_ADDRESS

After saving and exiting, generate the certificate:

# openssl req -config /etc/pki/tls/openssl.cnf -x509 -days 3650 -batch -nodes -newkey rsa:2048 -keyout /etc/pki/tls/private/logstash-forwarder.key -out /etc/pki/tls/certs/logstash-forwarder.crt
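Editing the system-wide openssl.cnf affects every other use of OpenSSL on the server. One possible alternative, sketched below, is to pass a throwaway config file that carries only the subjectAltName. The function name `make_logstash_cert`, the `CN = logstash` subject and the `basicConstraints` line are hypothetical choices; the key size, validity and file names match the command above. It avoids `openssl req -addext`, which the OpenSSL 1.0.2 packaged with CentOS 7 does not support.

```shell
#!/usr/bin/env bash
# Hypothetical helper: create a self-signed certificate whose subjectAltName
# carries the Logstash server's IP, without modifying the global openssl.cnf.
#   $1 = server IP address, $2 = output directory (default: /etc/pki/tls)
make_logstash_cert() {
  local ip=$1 outdir=${2:-/etc/pki/tls} cnf
  cnf=$(mktemp)
  # Minimal request config: fixed subject, v3_ca extensions with the IP SAN.
  cat > "$cnf" <<EOF
[ req ]
distinguished_name = dn
x509_extensions = v3_ca
prompt = no

[ dn ]
CN = logstash

[ v3_ca ]
basicConstraints = CA:TRUE
subjectAltName = IP:${ip}
EOF
  mkdir -p "$outdir/private" "$outdir/certs"
  openssl req -config "$cnf" -x509 -days 3650 -batch -nodes \
    -newkey rsa:2048 \
    -keyout "$outdir/private/logstash-forwarder.key" \
    -out "$outdir/certs/logstash-forwarder.crt"
  local rc=$?
  rm -f "$cnf"   # the temporary config is no longer needed
  return "$rc"
}
```

For example, as root: `make_logstash_cert 192.168.0.1`, with your server’s IP address in place of 192.168.0.1.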

Next, create the Logstash pipeline configuration in /etc/logstash/conf.d/: typically one file for the Filebeat (Beats) input, one for syslog processing, and one that defines the Elasticsearch output.

The exact contents depend on how you want to filter the data.
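As one possible starting point, here is a sketch that writes three such files. Everything in it is an assumption to adapt: the file names, the conventional Beats port 5044, a Filebeat configuration that marks syslog events with type `syslog`, the grok pattern, and the daily index naming. The certificate paths are the ones created earlier, and /etc/logstash/conf.d/ is the default pipeline directory of the Logstash RPM. The function name `write_logstash_conf` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a three-file Logstash pipeline
# (Beats input -> syslog filter -> Elasticsearch output).
# File names, port, grok pattern and index name are illustrative assumptions.
write_logstash_conf() {
  local dir=${1:-/etc/logstash/conf.d}
  mkdir -p "$dir"

  # Input: accept events from Filebeat over TLS using the certificate above.
  cat > "$dir/02-beats-input.conf" <<'EOF'
input {
  beats {
    port => 5044
    ssl => true
    ssl_certificate => "/etc/pki/tls/certs/logstash-forwarder.crt"
    ssl_key => "/etc/pki/tls/private/logstash-forwarder.key"
  }
}
EOF

  # Filter: split classic syslog lines into fields and parse their timestamp.
  cat > "$dir/10-syslog-filter.conf" <<'EOF'
filter {
  if [type] == "syslog" {
    grok {
      match => { "message" => "%{SYSLOGTIMESTAMP:syslog_timestamp} %{SYSLOGHOST:syslog_hostname} %{DATA:syslog_program}(?:\[%{POSINT:syslog_pid}\])?: %{GREEDYDATA:syslog_message}" }
    }
    date {
      match => [ "syslog_timestamp", "MMM  d HH:mm:ss", "MMM dd HH:mm:ss" ]
    }
  }
}
EOF

  # Output: one daily index per Beat, e.g. filebeat-2017.01.15.
  cat > "$dir/30-elasticsearch-output.conf" <<'EOF'
output {
  elasticsearch {
    hosts => ["localhost:9200"]
    index => "%{[@metadata][beat]}-%{+YYYY.MM.dd}"
    document_type => "%{[@metadata][type]}"
  }
}
EOF
}
```

Run `write_logstash_conf` as root with no argument to write the files into /etc/logstash/conf.d/.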

Finally, enable and start Logstash:

# systemctl enable logstash
# systemctl start logstash

You have now successfully installed and configured the ELK Stack server-side!